By: Emmitt Miller

Computer graphics have changed enormously since the earliest days of computing. What started as simple dots and lines on primitive screens has grown into detailed digital worlds that can look almost identical to real life. Today, graphics power video games, animated films, virtual reality, architecture visualizations, and even medical research. The evolution of graphics is closely tied to the growth of computing power and human creativity. As computers became faster and more capable, artists, programmers, and engineers found new ways to turn raw processing power into images that are more immersive, realistic, and expressive.

The first computer graphics appeared in the mid-20th century when computers were enormous machines used mainly by scientists and universities. Early displays were extremely limited and could only show basic shapes or lines on a monochrome screen. One major milestone was Sketchpad, a program developed by computer scientist Ivan Sutherland in the early 1960s and described in his 1963 doctoral thesis at MIT. Sketchpad allowed users to draw shapes directly on a screen using a light pen, which was revolutionary at the time. Instead of simply printing numbers or text, computers could now display interactive visuals. In those early years, many systems used vector graphics, where lines were drawn between specific points rather than forming images from pixels. While simple by modern standards, these systems introduced the idea that computers could be used as visual tools rather than just calculating machines.

As technology improved during the 1970s and 1980s, raster graphics began replacing vector displays. Raster graphics use a grid of tiny pixels to build images, which allowed for more complex visuals and color displays. This change helped launch the early video game industry and made graphics accessible to everyday computer users. Early arcade games showed how engaging even simple visuals could be. Players controlled paddles, spaceships, or characters made of only a few shapes, yet the games felt exciting and interactive. Home computers soon followed, bringing colorful graphics into people’s homes. Although the resolution and color palettes were very limited, these systems introduced sprites, scrolling backgrounds, and basic animations that made digital worlds feel alive.
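
To make the difference between the two approaches concrete, here is a minimal sketch of how a vector-style line (defined only by its two endpoints) can be converted into raster pixels. It uses Bresenham's line algorithm, a classic integer-only technique; the function names `rasterize_line` and `render` are illustrative choices, not taken from any particular system.

```python
def rasterize_line(x0, y0, x1, y1):
    """Return the grid cells (pixels) approximating the segment (x0, y0)-(x1, y1).

    Uses Bresenham's line algorithm with integer arithmetic only.
    """
    pixels = []
    dx = abs(x1 - x0)
    dy = -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1   # step direction along x
    sy = 1 if y0 < y1 else -1   # step direction along y
    err = dx + dy               # running error between ideal line and grid
    while True:
        pixels.append((x0, y0))
        if x0 == x1 and y0 == y1:
            break
        e2 = 2 * err
        if e2 >= dy:            # error favors a horizontal step
            err += dy
            x0 += sx
        if e2 <= dx:            # error favors a vertical step
            err += dx
            y0 += sy
    return pixels


def render(pixels, width, height):
    """Draw the pixels into a text grid: '#' is lit, '.' is dark."""
    grid = [["." for _ in range(width)] for _ in range(height)]
    for x, y in pixels:
        if 0 <= x < width and 0 <= y < height:
            grid[y][x] = "#"
    return "\n".join("".join(row) for row in grid)


if __name__ == "__main__":
    # A line from (0, 0) to (7, 3) on an 8x4 pixel grid.
    print(render(rasterize_line(0, 0, 7, 3), 8, 4))
```

The vector description needs only four numbers, while the raster version has to decide which individual pixels to light. That extra work is part of why raster displays depended on cheaper memory and faster hardware.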

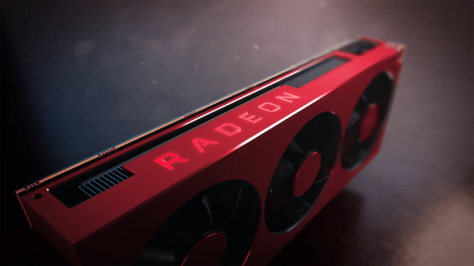

The 1990s marked one of the most important turning points in graphics history with the rise of real-time 3D graphics. Instead of flat images, developers began building environments using polygons that formed three-dimensional objects. Games became more immersive as players could explore spaces that appeared to have depth and perspective. At the same time, specialized graphics hardware began appearing in personal computers. These 3D accelerators, which later became known as graphics processing units, or GPUs, were designed specifically to handle the complex math needed to render images quickly. Companies such as NVIDIA and ATI helped push this technology forward, making it possible for home computers to display smoother animations, detailed textures, and more complex lighting effects. For the first time, digital environments started to feel like believable spaces rather than simple pictures on a screen.
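
The sense of depth in polygon-based scenes comes largely from perspective projection: points farther from the viewer are drawn closer to the center of the screen. The sketch below is a simplified illustration, assuming a camera at the origin looking down the positive z axis; the function name `project_point` and the `focal_length` parameter are hypothetical, and real engines typically use 4x4 matrices instead.

```python
def project_point(point, focal_length=1.0):
    """Project a 3D point (x, y, z) onto a 2D image plane.

    Assumes the camera sits at the origin looking along +z, so dividing
    by z makes distant points shrink toward the center of the image.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    return (focal_length * x / z, focal_length * y / z)


if __name__ == "__main__":
    # The same square polygon placed at two different depths.
    square = [(-1.0, -1.0), (1.0, -1.0), (1.0, 1.0), (-1.0, 1.0)]
    near = [project_point((x, y, 2.0)) for x, y in square]
    far = [project_point((x, y, 4.0)) for x, y in square]
    print("near:", near)
    print("far: ", far)
```

The far square projects to half the size of the near one, which is the visual cue that makes a flat screen read as a three-dimensional space.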

During the 2000s and 2010s, graphics technology advanced at an incredible pace. GPUs became extremely powerful, allowing developers to simulate realistic lighting, shadows, reflections, and physical effects. Techniques such as shader programming and high dynamic range lighting made digital scenes richer and more detailed. Computer graphics also expanded far beyond video games. The film industry began using advanced computer-generated imagery to create entire characters and environments that blended seamlessly with live action. Architects used rendering software to visualize buildings before construction, while scientists used graphics to explore complex data and simulations.

One of the most exciting recent developments has been the introduction of real-time ray tracing. Ray tracing simulates how light behaves in the real world, calculating how rays bounce off surfaces, pass through glass, and reflect in mirrors. In the past, this technique required enormous computing power and could take hours to render a single image. Today, modern graphics cards with dedicated ray tracing hardware can perform these calculations fast enough to render many frames per second, creating reflections and lighting that look far more natural than older techniques.
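
The core calculation behind ray tracing can be sketched in a few lines: find where a ray hits a surface, then compute the direction it bounces. The example below is a minimal illustration using a single sphere and a perfect mirror reflection. The helper names (`intersect_sphere`, `reflect`) are hypothetical, and a real renderer would trace millions of such rays and add materials, shadows, and refraction.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def scale(v, s):
    return tuple(x * s for x in v)


def intersect_sphere(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit, or None on a miss.

    Assumes `direction` is a unit vector, so the quadratic's leading
    coefficient is 1.
    """
    oc = sub(origin, center)
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0:
        return None  # the ray misses the sphere entirely
    root = math.sqrt(disc)
    for t in ((-b - root) / 2.0, (-b + root) / 2.0):
        if t > 1e-6:  # ignore hits behind (or exactly at) the ray origin
            return t
    return None


def reflect(direction, normal):
    """Mirror `direction` about a unit surface `normal`."""
    return sub(direction, scale(normal, 2.0 * dot(direction, normal)))


if __name__ == "__main__":
    origin = (0.0, 0.0, 0.0)
    direction = (0.0, 0.0, 1.0)          # unit vector pointing along +z
    center, radius = (0.0, 0.0, 5.0), 1.0

    t = intersect_sphere(origin, direction, center, radius)
    hit = tuple(o + d * t for o, d in zip(origin, direction))
    normal = scale(sub(hit, center), 1.0 / radius)  # unit normal at the hit
    print("hit distance:", t)
    print("bounce direction:", reflect(direction, normal))
```

Repeating this hit-and-bounce step for every pixel, and for every further bounce, is what made the technique so expensive before dedicated hardware existed.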

Looking toward the future, graphics technology will likely continue evolving in surprising ways. Artificial intelligence is already starting to assist in creating images, textures, and animations. AI tools can upscale lower-resolution graphics, fill in missing details, and even generate entire environments. Another major area of development is virtual reality and augmented reality, which aim to place users directly inside digital worlds. These technologies require extremely fast and realistic graphics to feel convincing, pushing hardware and software to new limits.

Researchers are also exploring new approaches such as neural rendering, where machine learning models help generate images instead of traditional rendering pipelines. This could dramatically reduce the computing power needed to create realistic scenes. Combined with advances in cloud computing and future hardware, these technologies could allow massive shared virtual environments or simulations that are far more detailed than anything possible today.

From the earliest glowing lines on a black screen to the breathtaking digital worlds we see today, the evolution of computer graphics has been driven by curiosity, creativity, and technological progress. Each new breakthrough has expanded what artists and developers can create, turning computers into powerful tools for storytelling and exploration. As new technologies continue to develop, the graphics of the future will likely become even more immersive, intelligent, and visually stunning than we can currently imagine.